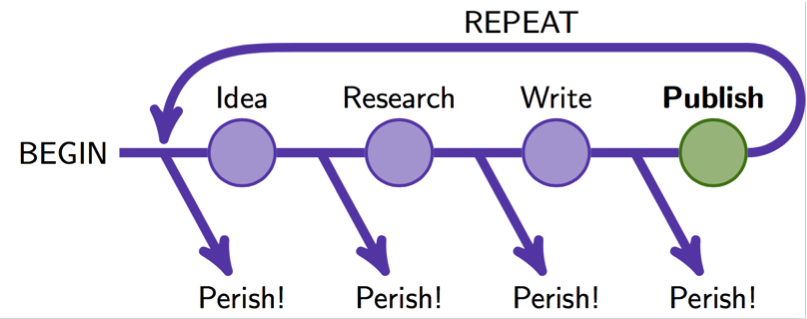

Academia is a tough career choice. The pay is low (especially for graduate students), the hours are long, and the job market is uncertain. Those entering the field often receive this simple advice: “publish or perish.” Publications are the central method by which people are evaluated in academia. One either continually publishes papers, ideally before other researchers working on similar topics, or watches as their career tanks. Those who fall behind may miss out on a job, fail to secure resources for their research, or get passed over for tenure. In fact, a host of tools and metrics that let scholars evaluate their publishing success has developed alongside this pressure to publish in prestigious journals. These metrics range from the simple number of publications, to the citation count of a paper (how many other papers cite it), to the journal impact factor, and even to the convoluted h-index.

The arcane details of all these metrics are of little interest to anyone not seeking a career as a scientist or other academic, but what should matter to everyone is how the incentive to publish no matter what can lead to bad science.

First, more publications may not be better. Having lots of publications is the best strategy for a scientist to accrue lots of citations, but the effect on science as an enterprise is less clear. Some evidence indicates that fields flooded with papers can become sluggish and slow to respond to innovative findings. It is also possible that the deluge of publications is gumming up the works, increasing the time it takes to get research out into circulation, although the evidence on this is unclear. From the researcher’s perspective, the additional effort needed to keep up with advances in one’s field can be detrimental enough on its own. Finally, there are concerns that publication pressure encourages “salami slicing” of work, where scientists split their projects into as many separate publishable parts as possible. This is inefficient, annoying, and potentially misleading (depending on how the separated parts of a project actually relate to each other).

Second, publishing is time-consuming and expensive. There can be a myriad of fees involved in submitting a paper, from simple submission fees, to article processing charges (required to make articles freely available in otherwise pay-walled journals), to color printing fees for paper journals. Additionally, all these papers have to be read by editors and peer reviewers, who are usually academics volunteering their time rather than paid employees. Every minute spent reviewing a paper is time spent away from one’s own research (or family, or hobbies, etc.). And with the need for ever more publications have come ever more scientific journals to publish in. The cost of paying for access to the burgeoning number of journals is an increasing financial burden for universities, or for researchers themselves in cases where no institutional support is available. This financial burden ironically includes buying access to papers published by the very researchers the university itself helps to fund, often with public money. Recently, California’s UC system grew so frustrated with the rising costs of paying for the “privilege” to access its own scholarship that it ended its subscription with academic publishing titan Elsevier.

Finally, the publish or perish mindset incentivizes problematic research practices. In the most egregious cases, scientists have engaged in academic fraud, plagiarism, or stealing the work of coworkers and graduate students to ensure a hefty record of publications. Data indicate that there are far more borderline results (that is, results that just barely squeak through disciplinary standards) than would be expected by chance alone, and that the pressure to publish may be biasing findings in the direction of publishable results rather than correct ones. Data interpretation can be as much an art as a science, and with enough massaging, investigators can often eke out a “finding” whether it’s really there or not. Not all of this is as malicious as outright fraud or deceitful interpretation; much of the time it is simply scientists looking for something, anything, to make out of their data. Unfortunately, the more scientists look, the more likely they are to find patterns in data, even if those patterns just happened by chance. Less extreme, but still concerning, are the selection of “safe” topics to research (those which ensure publication) and the practice of sending rejected papers to different journals without meaningfully responding to peer review, in the hopes that one eventually stumbles on a less attentive reviewer.
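To see why looking harder makes chance patterns more likely, consider a rough sketch. It assumes each look at the data is an independent statistical test on pure noise, and that a result counts as a "finding" when it passes a 0.05 threshold (a common convention in many fields, used here only for illustration). The function name is hypothetical.

```python
def chance_of_spurious_finding(num_tests: int, alpha: float = 0.05) -> float:
    """Probability that at least one of `num_tests` independent tests
    on pure noise passes the significance threshold `alpha`.

    Assumption: the tests are independent, which real analyses of a
    single dataset usually are not, so treat this as an illustration.
    """
    # Each test avoids a false positive with probability (1 - alpha);
    # all of them avoid one with probability (1 - alpha) ** num_tests.
    return 1.0 - (1.0 - alpha) ** num_tests


for k in (1, 5, 20, 100):
    chance = chance_of_spurious_finding(k)
    print(f"{k:>3} tests: {chance:.0%} chance of at least one false positive")
```

Under these assumptions, a single test yields a spurious result about 5% of the time, but twenty tests yield at least one about 64% of the time.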

Resolving these darker implications of the publish or perish mindset will require careful investigation of science as a social and institutional structure. Indiana University is a hub for this type of work. It has a strong tradition in sociology of science, which investigates science as a social system, and houses one of the nation’s few History and Philosophy of Science Departments. The Center for Complex Networks and Systems Research is also heavily involved in the growing field of science of science. The research done at IU in these fields, like the development of programs to share and analyze vast amounts of data on citation patterns, aims to help scientists understand, and improve, the operation of the scientific community.

Edited by Clara Boothby and Taylor Nicholas
