WHITE PAPER on AI

In response to: https://openai.com/blog/superalignment-fast-grants

I’ve spent the last two decades concentrating on a solo dive into the epistemic and ontological implications of technology. (As John Steinbeck said: (1) you can’t write a story by committee; (2) “The writer must believe that what he is doing is the most important thing in the world. And he must hold to this illusion even when he knows it is not true.”) My training in the world of 3D, spanning architecture (BS), city planning (MS), and cyber geospatial sciences (PhD), allows me to see the world from the one-dimensional nature of math/science knowledge systems all the way across to the 3D world of action and consequences. A nasty email (1D cyberspace) can annoy you, but a nasty drone (3D lived space) can kill you.

The data-driven world of AI may itself be replaced by parametric simulations (a video-gamified world). Coupled with drones and machines, this can cause disaster: a 3D-fied, hybridized simulation-reality grey zone.

My take on the superalignment problem: we have all been part of the superalignment problem since antiquity. Think of some of the first technologies unique to our species: fire, language, the wheel. Without control, fire can destroy us (as so many perished before we perfected controlling it). Through the wrong use of language we have faced Nazism and numerous other horrors. We still don’t fully understand the physics of origins (i.e., causal knowledge of physics beyond the Big Bang) or the destructive power of language (the deconstruction and post-structural interpretations of Foucault and Derrida: is ‘fake news’ fake news?). Maybe even the trifecta of Gödel’s theorems, Turing’s related work, and the Einstein-Podolsky-Rosen (EPR) paradox are related?

There is a prediction that we may have 2 trillion drone deliveries in the US by 2050, replacing the traffic-heavy, climate-destroying last-mile disaster of 2,000+ pound vehicles that we are now living through. The psycho-social and psycho-political acceptance of such tech will prove to be its Achilles’ heel. When Frank Lloyd Wright started designing helipads next to his Usonian (tailored to the US) houses, he envisioned future cars as flying. Instead, flying is restricted to 30K feet, heavily monitored, and very costly compared to driving. Why did it not take off? After all, personal planes would use less steel than cars of the future (they need to be lightweight). The marginal cost of production would fall with competing tech, ultimately making aircraft cheaper than road vehicles. It did not happen because of the psycho-social rejection of flying craft (oh no! a plane crashed on Joe’s roof!). The psycho-political rejection followed (insurance premiums skyrocketed, and regulations killed the nascent industry).

Now the vision is being floated again! Electric aerial transport (drones) will be our flying cars (at least get us deliveries without a human pilot, please!). Will it get killed because someone’s pet dies from a crashed drone, or because someone attacks a politician? (President Biden wants legislation to monitor drones, as politicians are at the highest risk.)

The solution is not better tech. Even though AI may play a big role, it cannot solve superalignment problems (least of all when bad humans take control). So is the solution rooted in AI, or is it rather epistemic? I think it is epistemic. Philosophy’s underpinnings, epistemology on one hand and ontology on the other (my interpretation), can help us solve this.

The FAA-based framework and its resulting solutions will not work for drones: they will get banned after some initial excitement, ruining $100+ billion and 750+ drone startups in the US alone!

However, another field, communications (the FCC’s management of spectrum), can serve as a model (an ontological angle) against the epistemic dangers of FAA-based regulation plus AI superalignment. Those two won’t mix well and will probably nix a budding technology.

The solution: the FCC grants oligopolistic control of Earth’s free electromagnetic spectrum and monetizes it by pricing (AT&T gave $120 billion to public funds after two Stanford economists developed the 2020 Nobel-winning auction model; spectrum used to be free before!!). Once pricing is solved through a public-private partnership (the state regulating an oligopoly), the job becomes easier and a win-win for all: the public gets money where before it got $0; we get to use lots of high tech that grows from the oligopolistic architecture; and no one dies.

Therefore, superalignment can be ‘tamed’ by economic pricing. Can we do for drones what the FCC did with the electromagnetic spectrum?

Yes, with a solution built upon game-theoretic underpinnings (based on pricing theory, including Elinor Ostrom’s Nobel-winning, socially ‘profitable’ win-win equilibrium). The alternative is everyone flying their drones with AI systems trying to monitor them under the FAA, but with billions of individuals instead of a handful of airline companies!!
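As a toy illustration of how a price can shift a coordination problem toward a win-win outcome, the sketch below finds the pure-strategy Nash equilibria of a two-operator game before and after a fee is charged on reckless flying. The payoffs, the fee, and the names `with_fee` and `pure_nash_equilibria` are hypothetical; this is a textbook prisoner’s-dilemma-style game, not Ostrom’s framework or the SkyDOS mechanism.

```python
from itertools import product

# Strategies available to each of two hypothetical drone operators.
STRATEGIES = ("careful", "reckless")

# Hypothetical payoffs (operator A, operator B), prisoner's-dilemma style:
# reckless flying is individually tempting but jointly worse.
BASE_PAYOFFS = {
    ("careful", "careful"): (3, 3),
    ("careful", "reckless"): (0, 4),
    ("reckless", "careful"): (4, 0),
    ("reckless", "reckless"): (1, 1),
}


def with_fee(payoffs, fee):
    """Subtract a hypothetical fee from every operator who flies recklessly."""
    priced = {}
    for (a, b), (pa, pb) in payoffs.items():
        priced[(a, b)] = (
            pa - (fee if a == "reckless" else 0),
            pb - (fee if b == "reckless" else 0),
        )
    return priced


def pure_nash_equilibria(payoffs):
    """Return profiles where neither operator gains by deviating alone."""
    equilibria = []
    for a, b in product(STRATEGIES, repeat=2):
        pa, pb = payoffs[(a, b)]
        a_best = all(payoffs[(alt, b)][0] <= pa for alt in STRATEGIES)
        b_best = all(payoffs[(a, alt)][1] <= pb for alt in STRATEGIES)
        if a_best and b_best:
            equilibria.append((a, b))
    return equilibria


print(pure_nash_equilibria(BASE_PAYOFFS))               # [('reckless', 'reckless')]
print(pure_nash_equilibria(with_fee(BASE_PAYOFFS, 2)))  # [('careful', 'careful')]
```

Nothing about the technology changes between the two runs; only the incentives do, which is the sense in which pricing acts as the control.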

Important points:

- SkyDOS architecture as a ‘post-reinforcement learning from human feedback (post-RLHF)’ approach

- Pricing (political economy) as a control system (the cost of doing business is high, so failure is ‘hated’ and the feedback loop is disturbed early)

- Reduce unfaithfulness (cf. ‘Faithfulness in Chain-of-Thought Reasoning’)

- ‘Scalable oversight’ predetermined by the pricing mechanism: higher-cost systems should be more ‘oligopolic/monopolic’ (the best example is the global Nuclear Non-Proliferation Treaty (NPT))

- Oversight should be topological, not geometric (don’t allow drones to be identified; instead, make them fly in preregistered, fixed space. Law enforcement will remove violators, just as airlines get military support when at risk)
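One way to read “topological, not geometric” oversight is that compliance depends only on which preregistered corridors a drone uses, not on its exact coordinates. The sketch below, with made-up waypoint names and a hypothetical `first_violation` helper, checks a route leg by leg against a corridor graph and returns the first leg that leaves it, which is what a local law-enforcement app would act on. It is an illustration under these assumptions, not the patented SkyDOS architecture.

```python
# Hypothetical preregistered corridor network: each corridor is an
# undirected edge between two named waypoints (no coordinates needed).
CORRIDORS = {
    frozenset(("depot", "elm_st")),
    frozenset(("elm_st", "oak_ave")),
    frozenset(("oak_ave", "clinic")),
    frozenset(("elm_st", "school")),
}


def first_violation(route, corridors=CORRIDORS):
    """Return the first leg (a, b) of `route` that is not a registered
    corridor, or None if every leg stays on the network."""
    for a, b in zip(route, route[1:]):
        if frozenset((a, b)) not in corridors:
            return (a, b)
    return None


ok_route = ["depot", "elm_st", "oak_ave", "clinic"]
bad_route = ["depot", "oak_ave", "clinic"]  # shortcut off the network

print(first_violation(ok_route))   # None
print(first_violation(bad_route))  # ('depot', 'oak_ave')
```

Because the check is pure adjacency, it needs no continuous tracking of position; a flight is either on the registered network or it is not.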

NOTES:

- “Mechanistic Interpretability (MI) is the study of reverse engineering neural networks.”

- https://rome.baulab.info/

- “Reinforcement learning from human feedback (RLHF) has been very useful for aligning today’s models. But it fundamentally relies on humans’ ability to supervise our models. … Current RLHF techniques might not scale to superintelligence. …” https://openai.notion.site/Research-directions-0df8dd8136004615b0936bf48eb6aeb8#b8c3d0fa00d1401abc9c4069272f39f0

- “can we supervise a larger (more capable) model with a smaller (less capable) model?” “The superalignment problem … we still do not know how to reliably steer and control superhuman AI systems.” https://openai.com/research/weak-to-strong-generalization

Here is my entry (draft), as follows:

Superalignment Fast Grants

Apply by February 18th, 2024.

Basic information

Your name

Your email

Collaborators and affiliation

Please list all collaborators and their affiliations here (including yourself).

Grant type

Are you requesting funding to support a project at a university, a nonprofit, or as an individual, or are you a graduate student applying to the OpenAI Superalignment Fellowship?

About you and your proposed research

Short description

In <=5 bullet points, summarize your application.

- The superalignment problem as a 1D-to-3D problem (I am trained particularly in 3D/1D/3D convolutions)

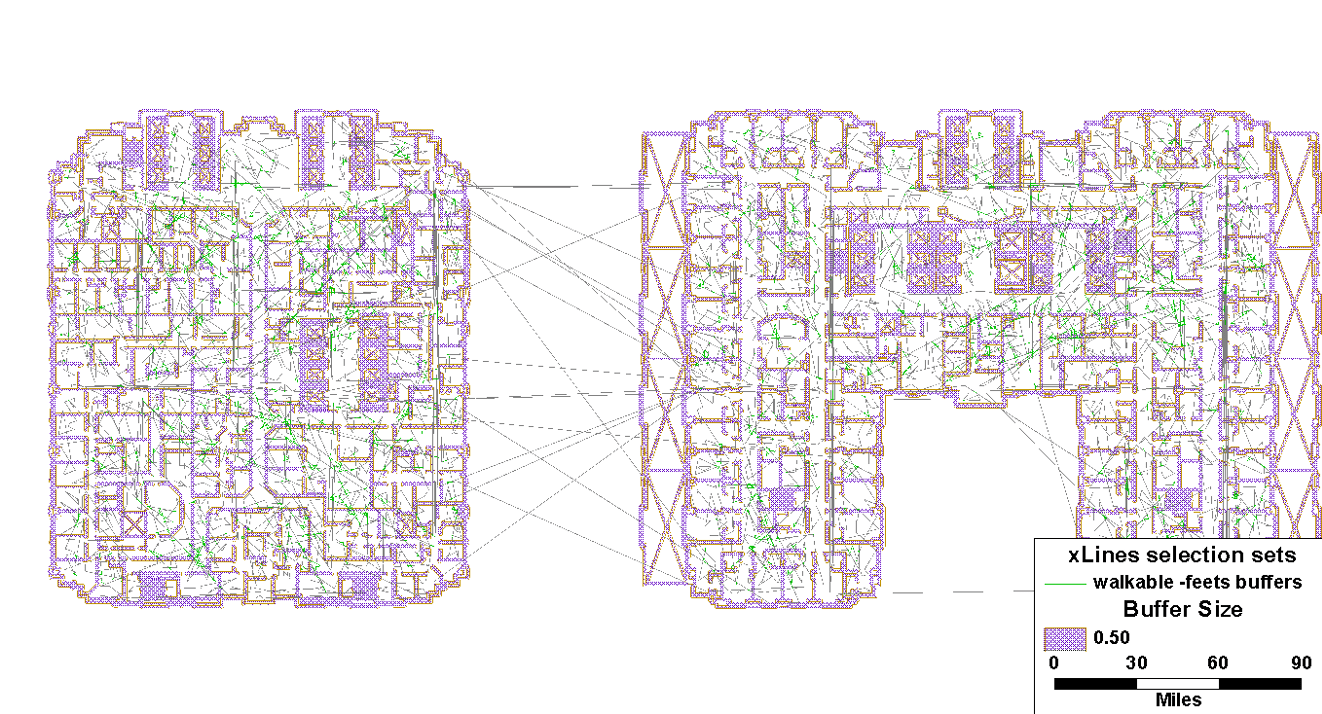

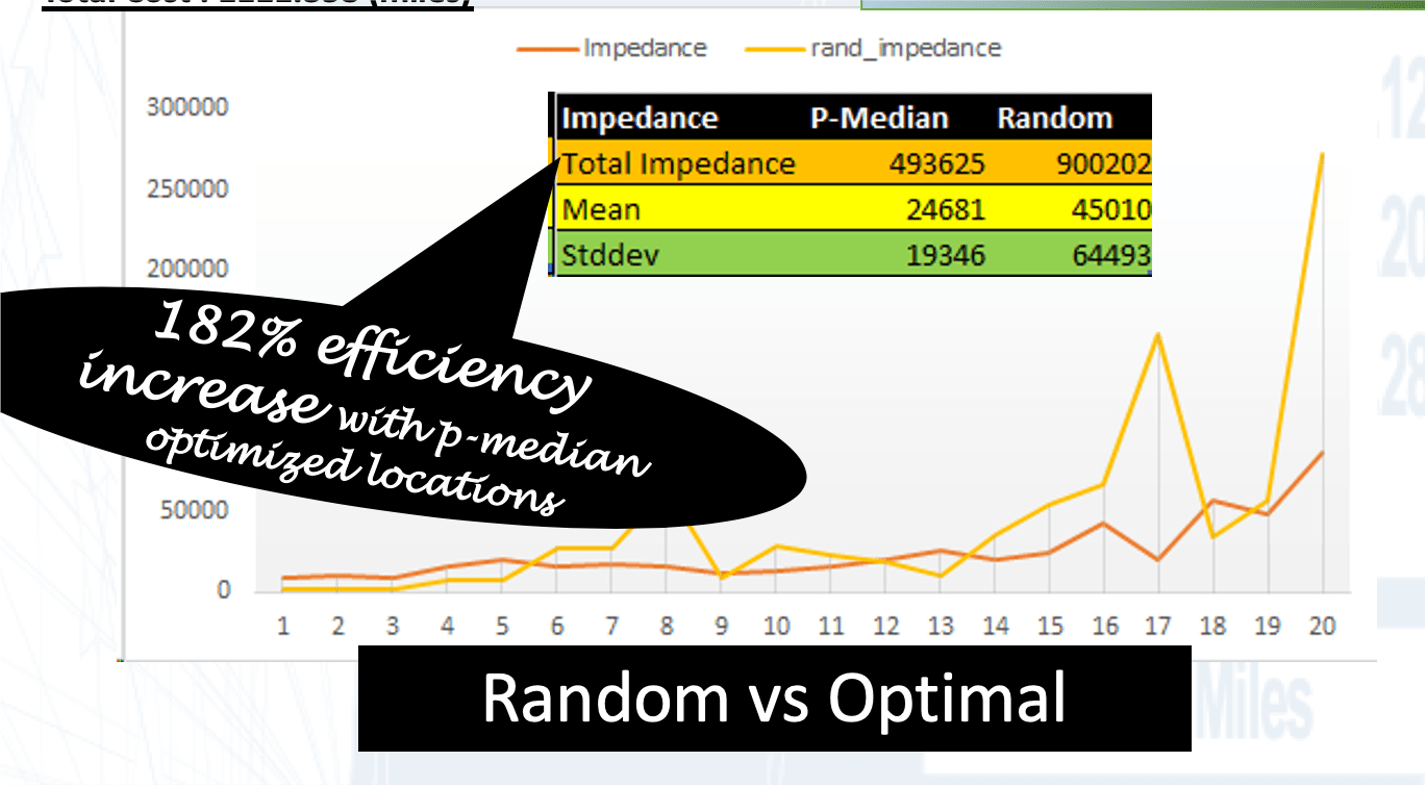

- Drones as a test bed for the superalignment problem (small programs driving complex, massive 3D outcomes; patented architecture)

- Political economy as a solution? (requires only small psycho-social/political decisions, made in advance rather than in real time: predetermined action)

- Mechanistic interpretability: the case for reverse engineering (GraphLLM: Boosting Graph Reasoning Ability of Large Language Model, coupled with reverse simulation)

- My recent invention (US Patent No.: US 11,853,953B2, Dec. 26, 2023: ‘Methods and Systems providing Aerial Transport Network Infrastructures for Unmanned Aerial Vehicles’) provides a comprehensive solution to the superalignment problem that includes spatiotemporal predictive simulations with psycho-social and psycho-political underpinnings to control for technological ‘superabundance’.

About you

Tell us about yourself (and any research collaborators). A paragraph or bullets will do.

Please describe past research and your best past work (even if an unrelated field).

SkyDOS is a drone operating system startup whose invention, ‘Methods and Systems providing Aerial Transport Network Infrastructures for Unmanned Aerial Vehicles’, creates the only option for a safe drone delivery system. It is a topological solution (instead of the FAA-type geometric solution) that allows level 5 automation to work successfully. Failure is controlled by local public safety instead of computerized/centralized solutions.

Links

Links to an online profile (website, resume, etc.) and some of your best work.

https://blogs.iu.edu/rudy/2023/10/30/short-cv/

Four areas of expertise (publications in Nature, Entomology, The Lancet, JAMA, Vision Research, Social Science & Medicine, etc.):

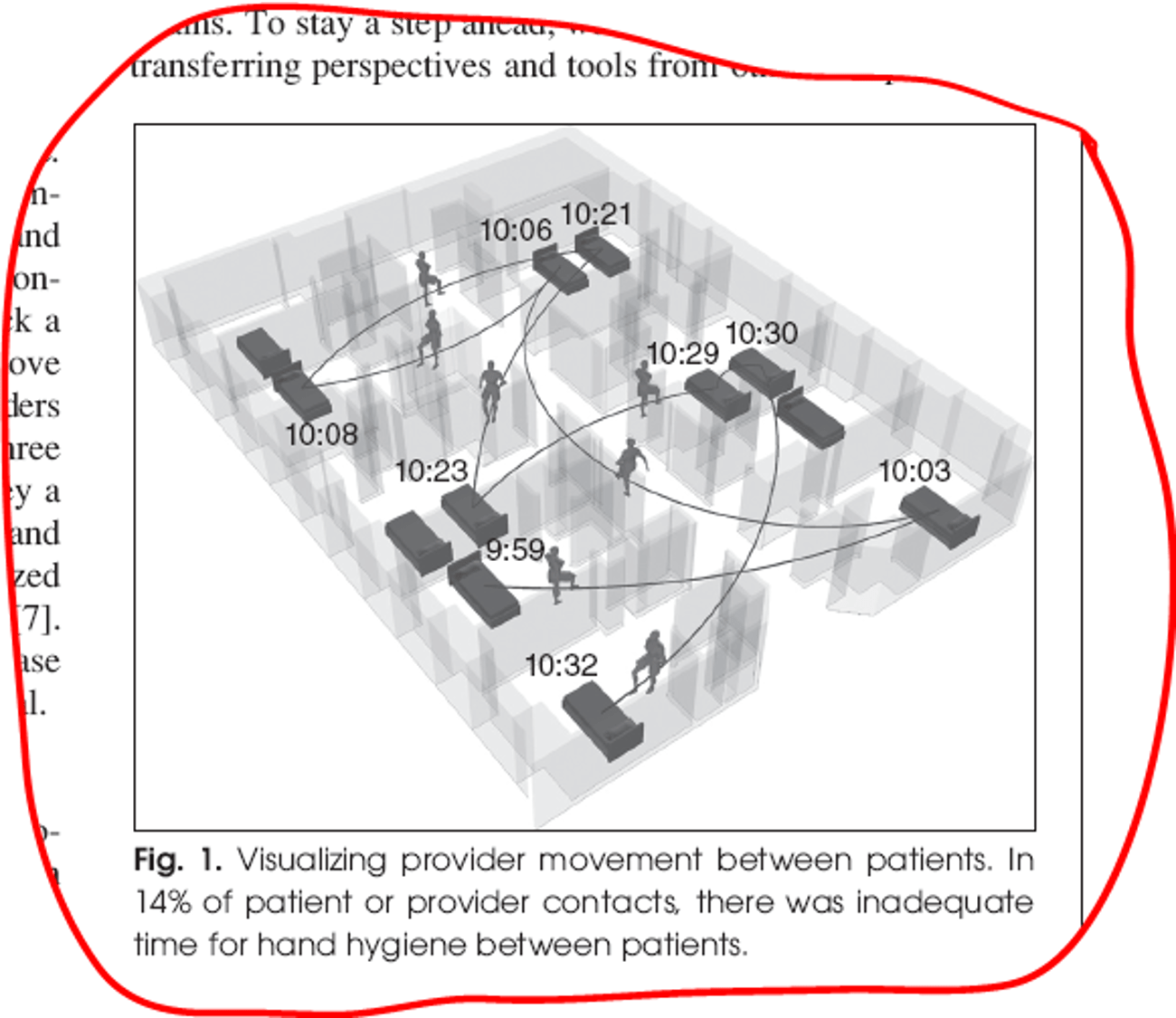

- Spatial Network Science/Complexity

- Spatial Evolutionary Genetics

- Spatial Mental Health

- Spatial Epidemiology, Drug Markets & Misc.

Startup & Inventions

Your research project

In a half-page or less, describe the research you wish to pursue.

Please be very concrete! Include milestones and expected output.

You can include links, and/or upload a pdf with further details at the end of this form.

- Develop a level 5 autonomous drone operating system based on TransModeler, a ground traffic simulation software that allows for level 5 automation (~1 year)

- Create a strongly coupled pricing mechanism (an opportunity-cost control theory concept) to manage superalignment (not a software-only solution but a coupled economic one!)

- Create software for the following:

  - Interpretability & weak-to-strong generalization: SkydosSIMS (predictive simulation to replicate action before it takes place)

  - Interpretability: SkydosRTOS (real-time operating system)

  - (Space-time) scalable oversight: SkydosTOY (a 1:1000-scale 3D model that acts as a real-time digital twin of live action)

  - Adversarial robustness: SkydosCOPS (app aimed at integrating local law enforcement to act, prevent accidents, take responsibility, etc.)

  - SkydosApp (app aimed at recruiting cities to participate)

  - SkydosFCC (app aimed at providing pricing/compensation to cities)

  - SkydosHUNT (market-based app aimed at bringing in business)

- Superalignment requires stochastic approximation. Many optimization problems are of a type where you have no mathematical model of the system (it may be too complex), but some parameters can be adjusted through simulation testing. Stochastic approximation is used to predict traffic accurately (speed, clustering, etc.) and allows parameters such as drone behavior and signal systems to be tuned.

- Superalignment (e.g., for drones) can be achieved using a combination of software, hardware, and 1D, 2D, and 3D simulation, integrated and tightly coupled with a pricing/policy/regulatory framework: a drone operating (eco)system like SkyDOS.

- Reinforcement learning from human feedback (RLHF) combined with SkyDOS’ real-time 3D simulation micro-model.
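The stochastic approximation item in the list above describes tuning a parameter when you can only run noisy simulations rather than write down the system’s equations. A minimal sketch of the classic Robbins-Monro scheme follows, assuming a made-up simulator in which mean corridor speed depends on a single drone-spacing parameter. The simulator, the 20 m/s target, the gain, and the clamp range are hypothetical stand-ins, not TransModeler or SkyDOS code.

```python
import math
import random


def simulated_mean_speed(spacing, rng):
    """Hypothetical noisy simulator: mean corridor speed (m/s) as a function
    of drone spacing. A real study would call a traffic simulator here."""
    true_speed = 30.0 * (1.0 - math.exp(-spacing))  # unknown to the optimizer
    return true_speed + rng.gauss(0.0, 2.0)          # simulation noise


def robbins_monro(target, theta0, n_iter=5000, gain=0.1, seed=0):
    """Robbins-Monro stochastic approximation: find theta such that
    E[simulated_mean_speed(theta)] == target, using only noisy samples.
    Step sizes gain/(n+1) sum to infinity while their squares stay finite."""
    rng = random.Random(seed)
    theta = theta0
    for n in range(n_iter):
        y = simulated_mean_speed(theta, rng)
        # Speed rises with spacing, so step down when the sample overshoots.
        theta -= gain / (n + 1) * (y - target)
        # Keep the parameter in a plausible range (projected variant).
        theta = min(max(theta, 0.0), 10.0)
    return theta


theta_hat = robbins_monro(target=20.0, theta0=0.5)
print(f"estimated spacing: {theta_hat:.3f}")  # analytic root of this toy model: ln(3)
```

The optimizer never sees the formula inside the simulator; it only reacts to noisy outputs, which is the situation the list item describes.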

Connection to alignment and safety of superhuman AI systems

*

Please briefly explain the motivation for your proposed research and how it will help with the alignment and safety of future advanced AI systems.

Paul Milgrom and Robert Wilson won the 2020 Nobel Prize in Economics for inventing new auction formats. What used to be given away for free is now priced: $120 billion is what AT&T paid the FCC (us, the public) in its latest auction.

Instead of having individuals buy radio spectrum or use it for free (leading to chaos not unlike the superalignment problem), the FCC auctions it off to well-regulated oligopolies. I propose a similar pricing solution, combined with predictive 3D simulation, to solve the superalignment problem, especially safety.
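Milgrom and Wilson’s formats (such as the simultaneous multiple-round auction) are far richer, but the basic idea of letting a rising price reveal who values a license most can be sketched with a single-license ascending clock auction. The bidders, their values, the increment, and the `clock_auction` helper are hypothetical; this is neither the FCC’s actual rule set nor a specified SkyDOS mechanism.

```python
def clock_auction(values, start_price=0.0, increment=1.0):
    """Simplified single-license ascending clock auction.

    values: dict mapping bidder name -> private value (hypothetical numbers).
    Each round the price rises by `increment`; a bidder stays active only
    while its value is at least the price. Bidding stops when one bidder is
    left, and that bidder pays the final price. Returns (winner, price).
    """
    price = start_price
    active = [bidder for bidder, value in values.items() if value >= price]
    if not active:
        return None, price
    while len(active) > 1:
        next_price = price + increment
        still_in = [bidder for bidder in active if values[bidder] >= next_price]
        if not still_in:
            # All remaining bidders would quit at once: stop here and break
            # the tie by highest value (first listed wins exact ties).
            break
        price = next_price
        active = still_in
    winner = max(active, key=lambda bidder: values[bidder])
    return winner, price


# Hypothetical corridor-license bidders and their private values ($M).
bids = {"carrier_a": 120, "carrier_b": 95, "carrier_c": 80}
print(clock_auction(bids, increment=5.0))  # ('carrier_a', 100.0)
```

The winner pays roughly the second-highest value (here within one increment of it), so bidders gain little by misreporting, and the seller collects revenue for a resource that would otherwise be given away.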

Funding

Budget

How much funding are you requesting?

$162,000

We generally expect to make grants between $100k-$2M.

(If you are a graduate student applying for the OpenAI Superalignment Fellowship, we default to our standard package of $75k stipend + $75k compute. In that case, you don’t need to fill out the budget in more detail.)

Budget description

Briefly break down how you intend to use the funds.

6 months’ sabbatical + 2 summer salaries for me and 2 years of graduate student salary ($102K + $60K = $162K)

In addition to your mainline budget, it can be helpful for you to give a lower and an upper bound (what are smaller/larger versions of the project we could fund?).

12 months’ sabbatical salary + graduate student ($100K to $200K)

Other funding?

Are you receiving or have you applied for other funding for this project?

Final notes

[optional] Attachments

[optional] Other info

Anything else you’d like to share with us?

See my white paper on AI:

https://blogs.iu.edu/rudy/2024/02/18/white-paper-on-ai/

I have read and understood the FAQs and terms of submitting an application
